Jul 22, 2022

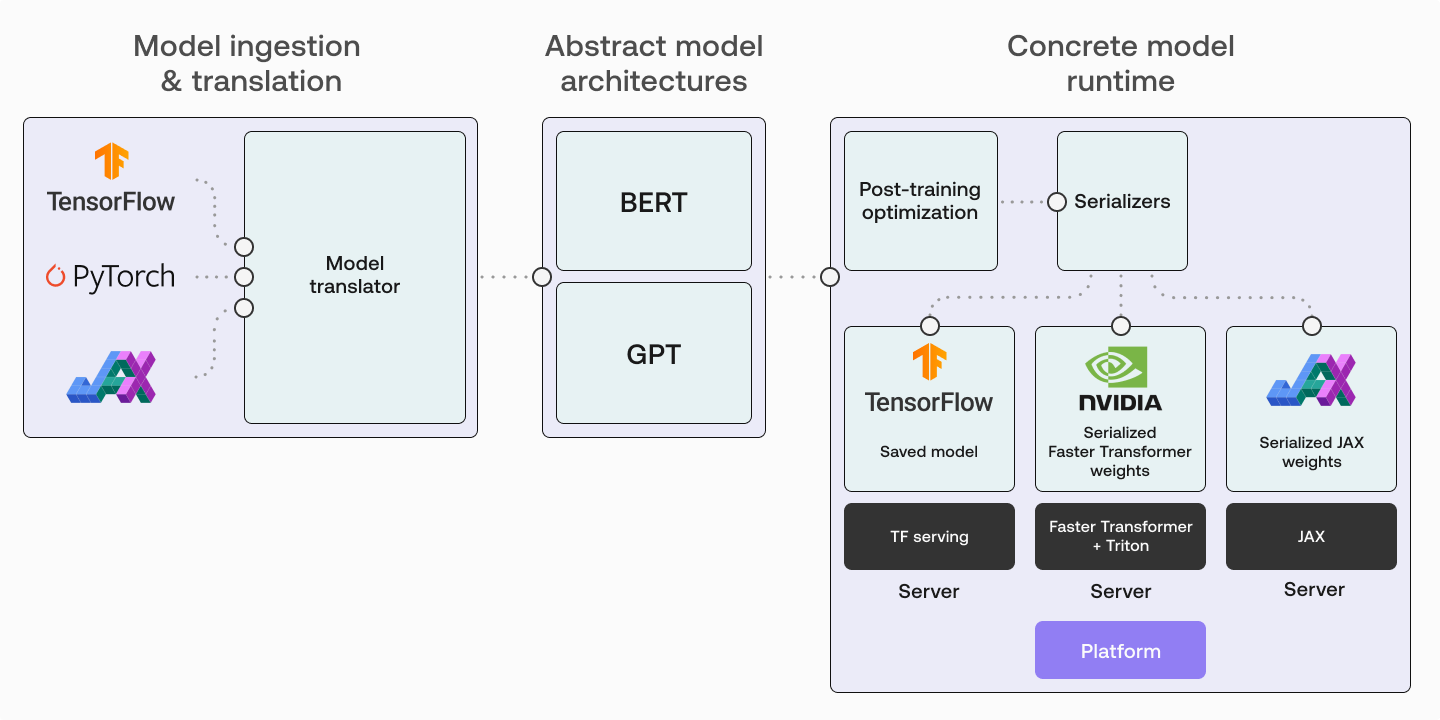

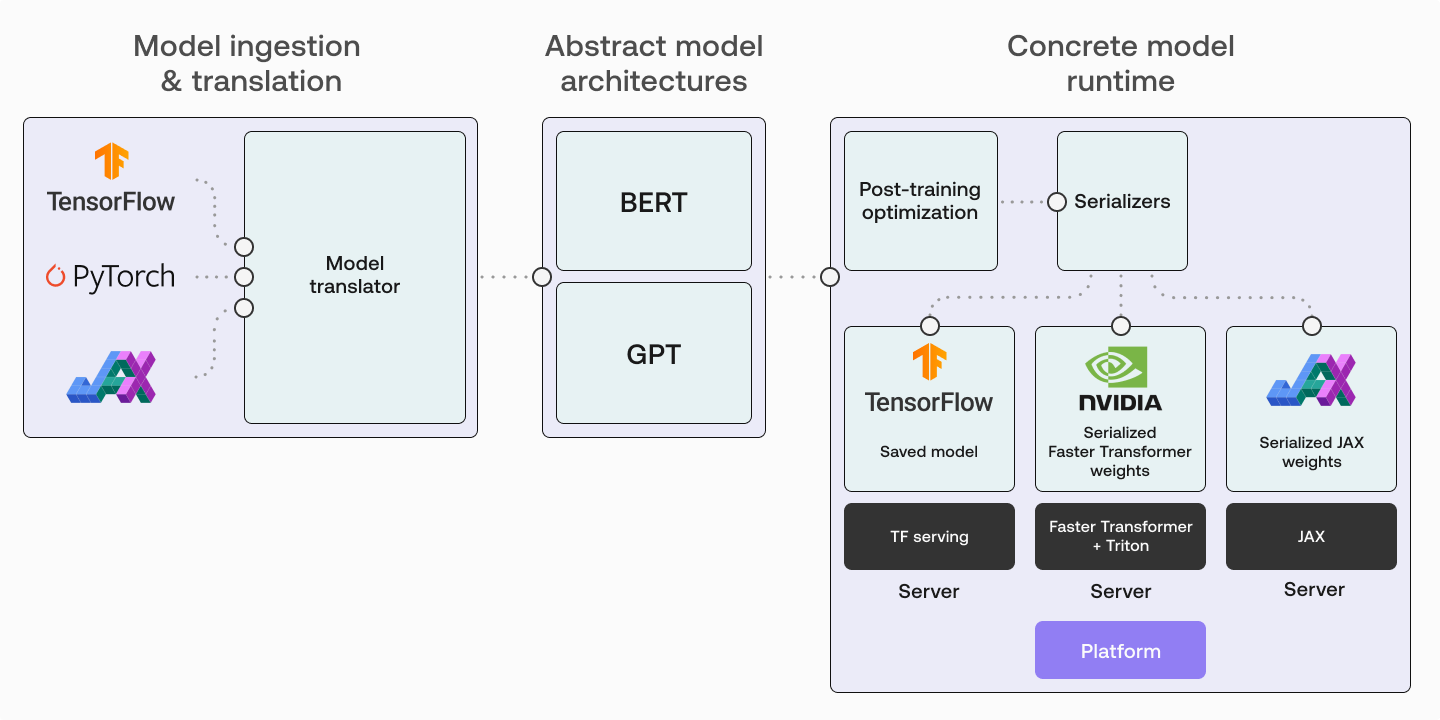

Running Large Language Models in Production: A look at The Inference Framework (TIF)
